Concerned you'll be falsely accused of using AI? Easily fixed! Don't use it, or if you do, declare it.

Don't sign up for it. Cancel it if you have. Uninstall it. Tell everyone that's what you've done. Put up notices about your principled standpoint on your website. Now write without AI. Done.

I’m not interested in the scandal side of how the book “Shy Girl” has had its publishing deal cancelled after revelations about AI use by its author. What I want to talk about is all the pearl-clutching going on: Oh. My. What if I’m accused of using AI! Gasp!!

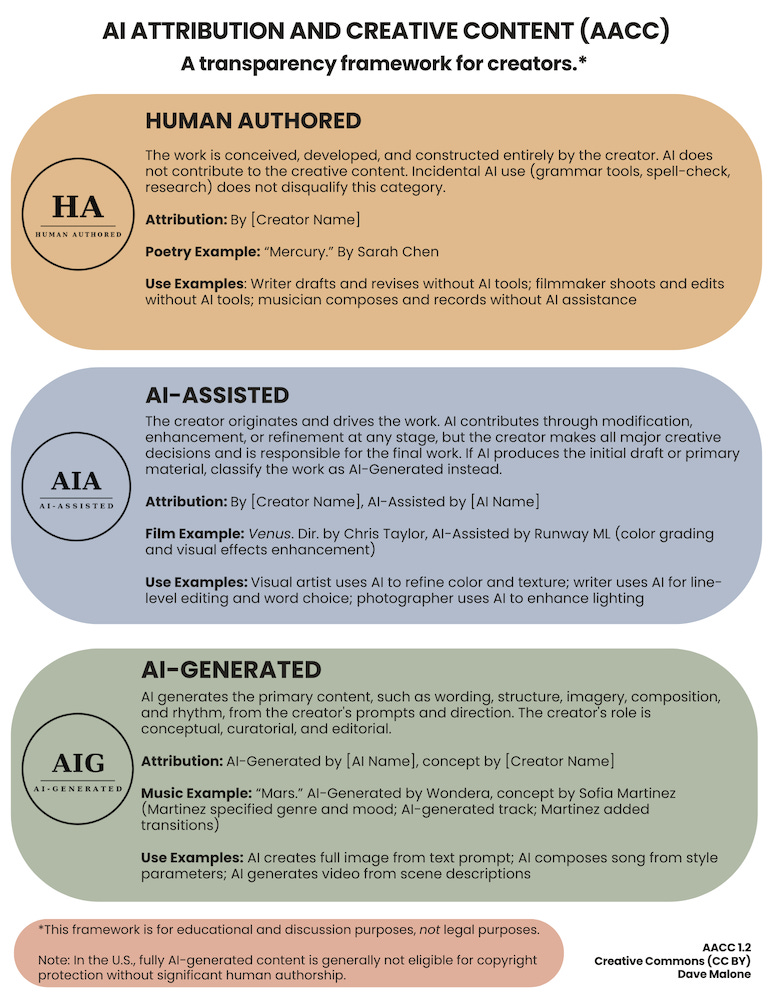

TL;DR - my answer is: don’t write with AI. Get yourself one of these and abide by it:

Or if you do use AI, say so - that way, no cancelled book deals! No scandal!

The Hot Takes on YouTube

Just so you know I’m not making this up, here is an example of the thing I’m talking about in a video about the Ballard thing by YouTuber Alyssa Matesic.

Now Alyssa’s videos are consistently helpful, and she is a hardworking editor. She generally has great advice, which she freely & generously gives with no expectation of payment. She rightly calls out this novel for being AI generated. She rightly says that the author probably did not expect that it would get the attention that it did, but that her claims of not knowing it was AI don’t hold water.

Where I think Alyssa is wrong is the continuing line of pearl-clutching about whether you as an author will be accused of using AI. “It goes far beyond Shy Girl” - “we should be terrified” she says, and blames AI detection software.

Alyssa is good people as far as I know. I’m only using her statement as an example of a wider trend I see: “Oh my - what if I get identified as AI but I’m not”.

It’s like drink-drivers who say “Oh what if I’m falsely detected as a drink driver” when they know they’ve been pushing the line with a few drinks around dinner before driving home.

The Mia Ballard Case

This is getting a lot of publicity right now. But Ballard is just one of lots of folks who think it’s harmless to steal stuff and claim it came from your creative mind. Here are the things to know about this:

The identification of “Shy Girl” as AI was 👉👉 done by humans

The rage is not because Ballard’s work was flagged by AI detection tools

And Alyssa’s video unfortunately implies that this is the case

Ballard also stole the cover art, used AI on that and used AI in the novel

She has confirmed the use of AI herself, so no “allegedly” needed

She also produced a bad novel, according to reports,

which for an obscure self-pub is fine…but…

for Hachette it is a problem because people feel ripped off

What I want to say today is - just don’t write your books with AI, and be up front about that. Take a stand, and then you won’t have to worry.

Unfortunately there are a ton of AI boosters around still defending “AI Writing”, and promoting the idea that it is just fine to go ahead and be an AI writer. But those boosters are not telling you that you might be the “next Mia Ballard”.

How can you Prove it / You need to have 100% AI Detection / No-one can tell it’s AI writing

This whole line of thinking, this commentary, is utterly wrong-headed, indefensible, blinkered knee-jerk stuff. It’s barking up the wrong tree. It’s solving a fake problem.

In 2008, when criminals added melamine (an industrial chemical used in plastics and laminates) to milk powder in order to fraudulently profit, customers did not know the difference. They got sick anyway - ignorance was no barrier to objective truth. It looked like milk powder. The truth here: that melamine mix is not milk powder!!

The standard for authenticity and provenance is not “can some random person tell the difference?” That was never the correct standard. Ever.

In 2009, when scammers sold a fake Brett Whiteley painting for $1.1m to an investor who thought it was the real thing, there was an objective truth: by provenance it was not a Brett Whiteley painting. When suspicions arose he found he was stuck with something worthless.

These questions (prove it/detection tools/no one can tell) are arse-about-face.

There is a simple objective truth: did you write the book, or did you get it in whole or in part from ChatGPT? Is it real or is it AI?

That truth remains, even if someone looks and doesn’t know they’ve been duped. Finding what that truth is can be simpler than you think. In the Ballard case, lots of humans looked at the book and by consensus the truth was discovered, and Hachette was convinced to scrap the deal. Ballard maintains her innocence, as people do (as the crooks in the art fraud case did) but we can see the outcome. And underlying it is the truth: it’s not real.

The AI Writer Brigade

These are the people trying dozens of different (AI generated) arguments to obfuscate this simple truth of provenance.

These are the really indefensible villains of the piece in my view, along with the AI vendors themselves. They constantly talk up using AI to write, they do it themselves, and yet try to claim victim-hood.

The methodology - claim victimhood, DARVO, tone-policing

Abusers use DARVO: deflect or deny, attack, then claim they are the victim

It’s narcissistic, gas-lighting and manipulative behaviour

The actions - use tech oligarch tools to profit

She skites about how much money she is making from it

She runs courses to teach others to do the same

The reality - plagiarism, IP theft

They teach how to create scam books that target keywords on popular books

The scam books are created by ripping off legitimate authors’ books to train AI

The rip-off works use similar AI covers, similar author names - everything to trap folks looking for the legitimate work by a known author and channel that book revenue into their scam-book-mill operation.

Here’s my response to one of the “AI writers” on Substack, who argued that publishers don’t really care if you write with AI.

On social media, some independent booksellers and small‑press publishers publicly pledge that they will not stock or publish AI‑generated or AI‑assisted books and threaten to drop authors they believe have used such tools.

Meanwhile, the biggest publishers in the world, the ones controlling most of the merchandise sold in those same bookstores, have said very little. No proclamations, prohibitions, or even a clear policy. Just silence.

AI is Fine says “AI Writer”? I call BS on that.

The claim from these folks is “I should not have to state that my work is AI generated, it’s fine to use AI, but I don’t want to say anything because bullying”.

First - Big 5 decide based on $$

The Big 5 publishers don’t care about anything except if your book will sell. If it has big social media presence, great - they’ll take it. They don’t want to talk about AI, they’re interested in if the book is a best seller.

When Shy Girl was mired in controversy and negative press, sales went down, so trad pub stopped selling it. Simple.

When traditional publishing picked up other self-published success stories, books that broke the rule about already being published, such as Matt Dinniman’s “Dungeon Crawler Carl” books, Andy Weir’s “The Martian”, or Hugh Howey’s “Wool” (the Silo books), they only cared that there was a ready market.

These books were published on the internet first, breaking what is supposed to be a hard-and-fast rule of trad pub: you cannot get your book picked up by the Big 5 if you already published it yourself. Those books are not AI, but my example is to show that the Big 5 are literally a sales business. Nothing matters except for selling books.

They didn’t understand what LitRPG was (though they likely do now), or how you grow plants on Mars, but they understand $$ and numbers.

Second - KDP cares

The 500lb gorilla. Amazon KDP cares: you must declare if you use AI when you push your book into Amazon, and I guarantee everyone who is writing with AI wants to put their slop onto KDP.

Artificial intelligence (AI) content (text, images, or translations)

We require you to inform us of AI-generated content (text, images, or translations) when you publish a new book or make edits to and republish an existing book through KDP. AI-generated images include cover and interior images and artwork. You are not required to disclose AI-assisted content. We distinguish between AI-generated and AI-assisted content as follows:

AI-generated: We define AI-generated content as text, images, or translations created by an AI-based tool. If you used an AI-based tool to create the actual content (whether text, images, or translations), it is considered “AI-generated,” even if you applied substantial edits afterwards.

AI-assisted: If you created the content yourself, and used AI-based tools to edit, refine, error-check, or otherwise improve that content (whether text or images), then it is considered “AI-assisted” and not “AI-generated.” Similarly, if you used an AI-based tool to brainstorm and generate ideas, but ultimately created the text or images yourself, this is also considered “AI-assisted” and not “AI-generated.” It is not necessary to inform us of the use of such tools or processes.

You are responsible for verifying that all AI-generated and/or AI-assisted content adheres to all content guidelines, including by complying with all applicable intellectual property rights.

Also Ingram Spark cares: you want to self-publish? Everything in print goes through Ingram, so you have to declare to them. You can lie: but then you’re a liar.

You spend a ton of ink and pixels talking up AI, saying how brilliant it is, then want to refuse to acknowledge you’re using it? I call BS.

Third - Many publishers, awards & booksellers care

Here’s the SFWA - apologising for getting it wrong and coming out against AI generated work being accepted for the Nebula. Most other awards and prizes are the same now.

Here’s Taylor & Francis - pre-eminent technical books publisher: Authors must clearly acknowledge within the article or book any use of Generative AI tools.

Here’s the Australian Publishers Association position:

AI developers and users must be required to declare when a work is wholly or partially AI-generated.

Most of the Big 5 will not accept unsolicited submissions in general and you have to go through agents: they generally will not accept AI generated work.

At present the laws in Australia (where I live and work) are in flux and our Government is teetering on the brink of some very bad decisions.

The main reason I’m writing articles like this one is to contribute to the voices of authors, creators and the book industry in Australia which stands to be killed, and have its corpse looted by AI companies unless IP laws are enforced by the law-makers here.

On the Bullying

These are people out here on Substack promoting AI use. Now they want to shut down criticism. This is narcissism, it’s DARVO. How do you get to promote AI book writing, and then turn around and claim you’re the victim?

They are promoting dangerous, insecure, exploitative technologies that enrich oligarchs, corrupt democracies and are beyond awful on so many levels that I have written a dozen articles about them on my tech substack.

On Accessibility

In a huge screed this AI writing booster says:

disabled people need AI to write, therefore it’s OK

there’s a blurred line between spell-check and LLMs

The article’s conclusion is just plain wrong: “access to tools determines who gets to participate” - they’re making an access argument to serve their own defence-of-AI agenda, but infantilising others to achieve it.

This is such a cynical and self-serving take from this AI booster. I’m not going to say any more about this garbage hot-take, as it’s already been done to death, and it resulted (rightfully in my view) in NanoWriMo being axed:

NanoWriMo defends AI writing and pisses off the whole internet

NanoWriMo statement calls critics of AI ableist and classist

I’m a disabled Writer: Here’s what I think of NanoWriMo’s statement

All of the above, trying to create room to defend the use of AI, is transparently self-serving and denigrates folks who genuinely are creative artists and writers with disability.

Side note: I’ve looked through the archive of “Whitney Foster” and see a post introducing themselves. There’s an AI generated portrait on the post, and no mention of any special needs or challenges that this author faces.

On Blurred Lines

The author tries to draw a continuum, a blurred boundary, and say here’s some AI that you do accept, therefore you must accept this other (generative) AI.

Google Translate: The transformer architecture was invented by Google researchers who were working on better translation tools. Translating text is generating text. End of story.

Grammarly/ProWritingAid: An ESL or dyslexic person (the author’s examples) using Grammarly is generating text. ProWritingAid - the tool that was being promoted by NanoWriMo and sponsored them - is exactly the same.

There are no “blurred lines” here. They’re generative AI. In technical circles (I’m a software engineer who writes) I see the same “slippery slope” arguments used to try to muddy the waters. Defenders of LLMs that have been trained on millions of stolen books point to the AI in my iPhone’s camera, or in a piece of medical equipment.

A classic deflection technique from AI scammers & boosters is to demand you

Last time I looked neither my iPhone nor a robot surgeon was trained on millions of stolen books. I don’t see my iPhone camera generating floods of garbage books on Amazon KDP.

Simple rule: are dozens of lawsuits by hundreds of creatives and authors being brought, and fought in the courts against a tech product that is accused of ripping off their work? If yes, then it’s probably generative AI.

On AI in our Computers

The blurred line argument is used by AI boosters and “AI writers” to argue that we are all “sinners” in what they want to call a “purity test”. We use computers to type our books, and they have AI in them, so… gotcha.

But that doesn’t get you to a defence of generative AI - which is plagiarism. Specifically if you cut-and-paste from your AI, or ship what AI generated in a book, then you do not know if it is coming straight out of someone else’s work.

Despite what AI companies say, AI repeats and memorises whole tranches of books and if you prompt for something from AI, you can only get what is already in its training set. Penguin Random House is currently suing ChatGPT because it completely copies books:

Old school spelling checkers and grammar checkers in Scrivener, which is what I use, are built on part-of-speech taggers, tokenisers and the other tools in the NLP chain. They are far from perfect but they help.

The line I draw is with NLP - natural language processing technologies. For example, TF-IDF (term frequency-inverse document frequency) is an NLP tool that can - appropriately trained - find “interesting words” in a document corpus. I used this years ago when working on a literature app to process public domain novels from Project Gutenberg.
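To show how little “generation” is involved, here is a minimal sketch of TF-IDF in plain Python. The function names (`tokenise`, `tf_idf`, `top_words`) and the three toy sentences are assumptions made up for illustration; they are not taken from any particular app or library. All it does is count words and score them.

```python
import math
import re
from collections import Counter


def tokenise(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def tf_idf(documents):
    """Return one {word: score} dict per document.

    tf  = times the word appears in the document / words in the document
    idf = log(number of documents / number of documents containing the word)
    """
    tokenised = [tokenise(doc) for doc in documents]

    # Count how many documents each word appears in (once per document).
    doc_freq = Counter()
    for tokens in tokenised:
        doc_freq.update(set(tokens))

    n_docs = len(documents)
    scores = []
    for tokens in tokenised:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            word: (count / total) * math.log(n_docs / doc_freq[word])
            for word, count in counts.items()
        })
    return scores


def top_words(score, k=3):
    """Pick the k highest-scoring words, breaking ties alphabetically."""
    ranked = sorted(score.items(), key=lambda kv: (-kv[1], kv[0]))
    return [word for word, _ in ranked[:k]]


# Hypothetical stand-ins for three novels.
docs = [
    "the whale swam under the ship and the whale dived",
    "the detective searched the house and the garden",
    "the ship sailed and the detective slept",
]
scores = tf_idf(docs)
print(top_words(scores[0], k=1))
```

A word like “the” appears in every document, so its score is zero, while a word that is frequent in one document and absent from the others floats to the top. Nothing here writes a sentence: it only ranks words that are already on the page.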

Spelling checkers, grammar checkers, even most NLP do not produce whole tranches of text like the above. More specifically, making the confected theoretical argument when no actual cases of this happening exist is just bad faith.

I never use the grammar checker in Scrivener, but I do use the spell checker dictionary.

You can turn off the system-level AI in Apple’s macOS, and in Windows. Once you do that the AI in text boxes won’t appear, and you’re free of AI meddling in your writing.

Internet Search

When researching for a book, every internet search tool has AI suggestions which are hard to defeat. I use DuckDuckGo, which is frankly not very good. I use Google when DuckDuckGo isn’t working.

But research - finding stuff - is not writing, and it’s not generating. It’s when you cut-n-paste from your browser into your manuscript that you cross a line, regardless of whether what you see is AI generated or from someone else’s blog post.

Got Anything to Declare?

This all brings me back to the core of this article. It’s dead simple. If AI is so fabulous then why not say it? Why not make a clear statement like this:

It’s discussed by a creator called Dave Malone, who is fairly pro-AI as far as I can tell, and who has a guest post on Jane Friedman’s blog where he discusses the framework in a fairly pro-AI way.

There’s no scandal. None of what happened to Mia Ballard would have happened if she had been up front about her use of AI - which, remember, wasn’t just in the book’s contents. It would have been a big nothing-burger.

Writers are doing this self declaration already in all sorts of ways. Kester Brewin says he’s doing it because his friends wonder if he uses AI due to his prolific publishing, and wants to self-declare that ‘no’, he just works hard. Fair enough!

The Authors Guild in the USA had so many members wanting to self-declare that they came up with their own trademarked logo and curated program “Human Authored”. I disagree with Jane Friedman in that link about the need to enforce it via an AI detection tool. I think even AI fanatics cannot front the risk of being pilloried by very good writers in the way Mia Ballard has been. There’s no need and no point in having an AI tool.

Why the AI Accusation Angle is So Damaging

The idea that “I need to protect myself from false accusations of AI use” is any kind of valid position needs to be strenuously resisted.

Here’s the fake scenario being painted:

roving bands of anti-AI bullies are combing the internet for victims

these bullies are an anti-AI lynch mob

armed with AI detection software that doesn’t work

waving the software at any book that catches their eye

no-one can be sure the person used AI but they’re blamed anyway

Here’s the reality:

A reader gets a new book, suspects it’s AI

They discuss it on various forums and compare notes with other readers

Eventually someone may run the book through AI detection software

Consensus and expert opinion develops that it’s AI

Editors and professionals often spend hours studying and analysing it

There may be direct evidence

Prompts left in

Admissions from the author after the fact

As far as I can tell, the whole idea is a case of “Methinks the author protests too much”. In the case of any cheating, the cheats always think they’re going to get away with it, they always have covers planned and protest their innocence. Think Lance Armstrong, the disgraced Tour de France cyclist who later admitted to doping on Oprah.

People who are up-front about using AI are not the ones being attacked.

And I’m not talking about vague statements that “Oh, I often discuss AI, so you should know that when I write I use AI”.

I am constantly referencing the work of others, linking to where I get information from and crediting images I use. It is not too much to ask to put a line in your work that says you use AI, if that is what you do.

It’s those who try to pass their work off as 100% human generated and then get caught out who are attracting the ire of the reading public.

We only see these complaints by the perpetrators of AI slop that they’re being bullied come after the unacknowledged AI use has been unmasked.

The narrative seems to be that if an “AI author” comes out swinging, attacking those who’ve identified their work as AI, someone will emerge to display some percentage figure from AI detection software. That supports other evidence, research and expert opinion.

AI detection software is like any test for anything. If I test for COVID-19, fraudulent credit card transactions or contributions to global warming, the result is supporting evidence.

So when these “AI authors” make claims about the iniquitous use of inaccurate AI detection software, they’re fighting a straw man. Again, Lance Armstrong fought the mounting evidence for years: the doping tests could be wrong, but they indicate we need to investigate.

AI Boosters are Being Critiqued as they should be

I say above that the next Mia Ballard, or those who are “worried about being accused”, have nothing to fear because the bullying stuff is fake. There are however glass-jawed AI boosters who are basically Mikkelsen Twins types: scammers promoting their AI author services, and talking up using AI to destroy the book world.

In my view those folks - like the one I discuss above - do deserve to have their views critiqued, and strenuously resisted. But that is not the same as attacking a person, and it’s not bullying. There is never a place for abuse of a human being, but their claims, their statements and their actions definitely should be critiqued especially when they support dangerous products that are destabilising democracy, enriching oligarchs and ripping off rights-holders.

Conclusion

Stop promoting the idea that the harmful, sycophantic, democracy-destroying products of the tech oligarchs are fine to use.

Here’s my statement:

I never use generative AI in any of my work. I do not have a login or account of any kind on any LLM vendor’s product or website, and I turned off AI integrations on my computers. I never cut-and-paste AI or any other work from anywhere into my articles, unless I completely attribute the quote or work. All my creative work is completely my own.

Don’t be drawn into the bad faith arguments put forward by the “AI writer” brigade, based on the debunked “blurred lines” argument, the infantilising and harmful access notions, or incorrect information about publisher policies.

Stop defending the actions of those who produce AI works with the goal of trying to pass them off as legitimate human authored books.

Note: do NOT attack the people who produce AI works, don’t denigrate them personally or in any way seek to attract hate to them. They are not the problem; broadly the problem is the generative AI product vendors. Critique their actions, call for regulation and explicitly challenge their statements seeking to diminish their role in using AI. Never attack the person themselves.

We have to avoid being silenced in legitimate critique of AI use in so-called “AI writing”. And we can resist the tone-policing and DARVO attacks made by these narcissistic attempts to “be an author” using AI.